Emerging Technologies in Acute Pain Medicine

The challenge of effective acute pain management looms large in American society in light of the opioid crisis. Scientists and innovators are advancing new pharmacologic agents (eg, neosaxitoxin) and procedural modalities (eg, percutaneous nerve stimulation) to provide effective non-opioid options. However, nonpharmacologic and nonprocedural technologies continue to evolve as well, and they are poised to usher in new frontiers of acute pain medicine. We believe three such technologies will impact acute pain medicine in the years to come: virtual reality, mixed-reality simulation, and artificial intelligence.

Virtual Reality

Virtual reality (VR) is an interactive, realistic, three-dimensional (3D), computer-generated experience that engages visual, auditory, perceptual, and potentially even olfactory senses so that participants can completely immerse themselves in a different world.[1] While wearing a small headset, a user can become fully engrossed in a different reality and focus on something other than their actual circumstances. VR was initially used for simulation, entertainment, and desensitization to phobias; its applications now include mitigating painful and anxiety-provoking experiences during the perioperative period and rehabilitation, as well as minimizing the psychosocial component of pain.


Pain perception in the cerebral cortex is intertwined with attention, emotion, memory, and the senses. Research suggests that VR influences the interplay of pain pathways and, consequently, how the cortex ultimately perceives painful stimuli.[2] By diverting attention away from painful stimuli, VR helps participants perceive a stimulus as less painful. Similar to cognitive behavioral therapy, VR can change subjects’ emotional associations with painful stimuli, improving their thought processes and coping skills in the situation.[3] This diversion of conscious attention away from painful stimuli produces VR analgesia.[2],[4]

Functional magnetic resonance imaging studies show decreased activity in brain regions that respond to experimental thermal pain stimulation, including reduced activity in the insula and thalamus, similar to what is seen with opioids.[5] In the clinical setting, VR has been most effective in providing analgesia for frequent but brief painful procedures, such as burn dressing changes.[1],[6] Evidence also supports its use for analgesia during periodontal procedures; for phantom limb pain, fibromyalgia, and complex regional pain syndrome; and for reducing periprocedural sedation.[2],[7–9]

Given ongoing technologic advances in medicine and the growing use of multimodal approaches to pain management, VR may play an expanding role in health care. VR is now inexpensive and can be paired with smartphones, making it easily accessible. Future applications include its use as an adjunct to biofeedback in the multimodal treatment of pain. VR may also be incorporated into perioperative rehabilitation and physical therapy and used to decrease the need for periprocedural sedation. Drawbacks are minimal but include motion sickness and the time required to coach patients. Given the lack of significant barriers, VR can be considered as part of a multimodal analgesic regimen when devising plans to minimize opioid use and improve patients’ experiences.

Mixed-Reality Simulation

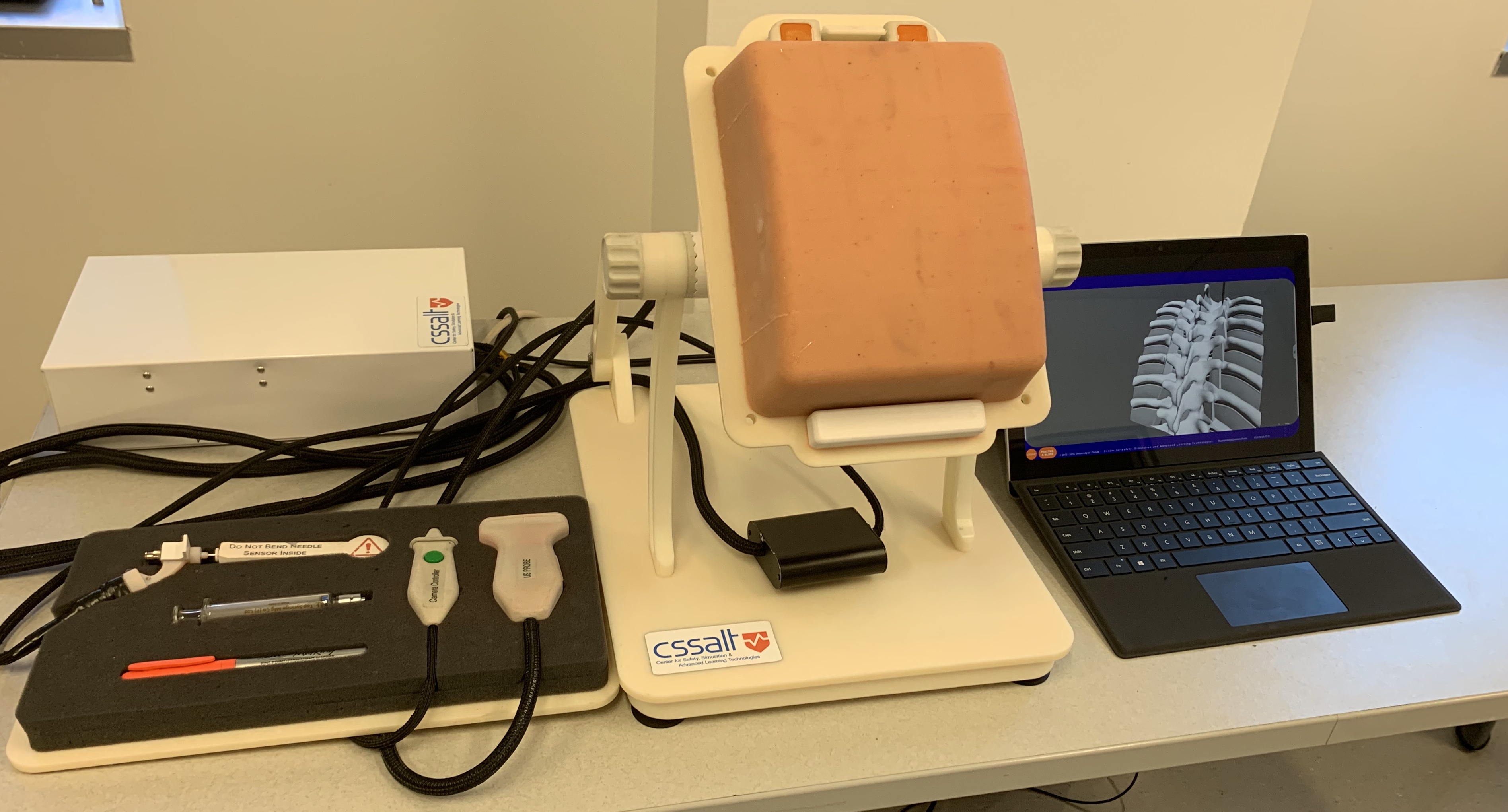

The mixed-reality simulator for thoracic epidural and paravertebral blocks is an educational tool that combines a physical high-fidelity model of the thoracic spine with VR imaging of the anatomy deep to the skin (Figure 1). In this simulator, learners can perform complex blocks using common endpoints (loss of resistance, hydrolocation) while correlating the ultrasound images and needle trajectories with the underlying anatomy. For example, when an ultrasound image is acquired in the axial view, the simulator shows the insonating beam as a semitransparent red plane on a 3D representation of the underlying anatomy. This helps learners understand which structures they are visualizing on ultrasound and how different manipulations of the ultrasound probe change the structures visualized. In addition, as a physical needle is advanced into the physical thoracic spine model, a virtual image of the needle appears on the 3D anatomic image so that the exact location of the needle tip and shaft can be identified throughout advancement. Both the endpoints and complications (eg, inadvertent dural puncture, pneumothorax) are realistically modeled.

Figure 1: Mixed-reality simulator
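The core geometric idea behind showing a virtual needle relative to the displayed beam plane can be illustrated with a short calculation. The sketch below is hypothetical and is not the simulator's actual implementation: it assumes the insonating beam is an ideal plane with a fixed half-thickness (1 mm here, an arbitrary illustrative value) and that coordinates are in millimeters. The names `signed_distance_to_plane` and `tip_in_plane` are illustrative only.

```python
import math

Vec3 = tuple[float, float, float]


def subtract(a: Vec3, b: Vec3) -> Vec3:
    """Component-wise difference a - b."""
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def dot(a: Vec3, b: Vec3) -> float:
    """Dot product of two 3D vectors."""
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def signed_distance_to_plane(point: Vec3, plane_origin: Vec3, plane_normal: Vec3) -> float:
    """Signed distance from a point to the (assumed ideal) beam plane.

    The sign indicates which side of the plane the point lies on. The normal
    need not be unit length because the result is divided by its magnitude.
    """
    normal_length = math.sqrt(dot(plane_normal, plane_normal))
    return dot(subtract(point, plane_origin), plane_normal) / normal_length


def tip_in_plane(tip: Vec3, plane_origin: Vec3, plane_normal: Vec3,
                 half_thickness_mm: float = 1.0) -> bool:
    """Hypothetical check: does the needle tip lie within the assumed beam thickness?"""
    return abs(signed_distance_to_plane(tip, plane_origin, plane_normal)) <= half_thickness_mm


# Example: probe face at the origin, beam plane spanning the x and z axes (normal along y).
probe_origin = (0.0, 0.0, 0.0)
beam_normal = (0.0, 1.0, 0.0)
print(tip_in_plane((5.0, 0.4, 20.0), probe_origin, beam_normal))  # True: tip 0.4 mm off-plane
print(tip_in_plane((5.0, 3.0, 20.0), probe_origin, beam_normal))  # False: tip 3 mm off-plane
```

A working system would also need the probe's tracked position and orientation to update the plane continuously as the probe moves; that tracking step is outside this sketch.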

Currently, the simulators are used for independent learning of midthoracic paravertebral and epidural block techniques and come fully equipped with:

- Prompts on a practical approach to landmark-based, ultrasound-assisted, and ultrasound-guided thoracic epidural and paravertebral blocks

- In-depth anatomy lessons through comprehensive slide presentations on thoracic paravertebral and epidural anatomy, with 3D representation from different perspectives

- Guidance on ultrasound image acquisition and interpretation

- Tailored feedback

The procedures are broken into multiple steps to help learners avoid common pitfalls and errors that accompany routine tasks (eg, determining the correct vertebral level for placement, acquiring an optimal image, advancing the needle precisely in plane). Future directions include incorporating more challenging and realistic spinal anatomy and extending the simulator to fascial plane blocks.

Artificial Intelligence

From the perspective of health care applications and research, artificial intelligence (AI) generally refers to analytical AI, whereby algorithms are taught a cognitive representation of the world around us. More formally, when researchers discuss analytical AI, they often mean machine-learning algorithms that identify patterns and inferences within data. From these patterns, the algorithms either develop generalizations that organize unlabeled data (ie, unsupervised learning) or learn how features associate with labels in training data so that they can predict the labels of new, unlabeled observations from those observations’ features (ie, supervised learning).
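To make the unsupervised/supervised distinction concrete, the toy sketch below uses made-up one-dimensional values that come from no study. The unsupervised part groups unlabeled values with a basic k-means loop; the supervised part learns one average (centroid) per label from labeled examples and assigns a new observation to the nearest one. The function names are illustrative, and neither method is meant to represent what any particular study used.

```python
from statistics import mean


def kmeans_1d(values, k=2, iterations=20):
    """Unsupervised: split unlabeled numbers into k groups (basic Lloyd's k-means).

    Assumes k >= 2 and at least k values.
    """
    if k < 2 or len(values) < k:
        raise ValueError("need k >= 2 and at least k values")
    ordered = sorted(values)
    # Start centroids at evenly spaced positions in the sorted data.
    centroids = [ordered[i * (len(ordered) - 1) // (k - 1)] for i in range(k)]
    for _ in range(iterations):
        clusters = [[] for _ in range(k)]
        for v in values:
            nearest = min(range(k), key=lambda c: abs(v - centroids[c]))
            clusters[nearest].append(v)
        # Move each centroid to the mean of its members (keep it if the cluster is empty).
        centroids = [mean(c) if c else centroids[i] for i, c in enumerate(clusters)]
    return centroids


def fit_nearest_centroid(features, labels):
    """Supervised: learn one centroid per label from labeled training data."""
    by_label = {}
    for x, y in zip(features, labels):
        by_label.setdefault(y, []).append(x)
    return {y: mean(xs) for y, xs in by_label.items()}


def predict(centroids, x):
    """Assign a new observation to the label whose centroid is closest."""
    return min(centroids, key=lambda y: abs(x - centroids[y]))


unlabeled = [1, 2, 2, 3, 7, 8, 8, 9]
print(kmeans_1d(unlabeled))  # [2, 8]: two groups found without any labels

train_x = [1, 2, 3, 7, 8, 9]
train_y = ["low", "low", "low", "high", "high", "high"]
model = fit_nearest_centroid(train_x, train_y)
print(predict(model, 4), predict(model, 6))  # low high
```

The unsupervised function never sees a label; it only discovers that the values fall into two groups. The supervised functions need the labels up front but can then name the group a new observation belongs to.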

For structured, organized data, many machine-learning approaches are comparable to traditional statistical techniques.[10] For instance, introductory lessons on machine-learning classification algorithms compare them with logistic regression. Indeed, for well-organized, structured data that fulfill the requisite assumptions of statistical techniques, standard statistical methods offer important advantages over machine-learning algorithms. For example, in an outcomes research question that uses electronic health record data, a researcher identifies an outcome of interest (eg, 30-day mortality), considers features that may be associated with the outcome (eg, age, sex, American Society of Anesthesiologists physical status, block type), and fits a statistical model to estimate the weight of each feature, or parameter, in its association with the outcome. This approach emphasizes inference and interpretability, quantifying both the magnitude of each feature’s association with the outcome and the confidence in that estimate.
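The workflow in the preceding paragraph can be sketched as a small logistic regression fitted by gradient descent in plain Python. The data below are invented for illustration (16 hypothetical patients, one binary feature standing in for ASA physical status III or higher, and one binary outcome) and are not drawn from any study. Exponentiating a fitted coefficient gives an odds ratio, the kind of interpretable effect size the paragraph describes. A full statistical package would also report standard errors and confidence intervals, which this sketch omits.

```python
import math


def sigmoid(z):
    """Logistic function mapping a linear score to a probability."""
    return 1.0 / (1.0 + math.exp(-z))


def fit_logistic(X, y, lr=0.5, epochs=5000):
    """Fit an intercept and weights by batch gradient descent on the mean log-loss."""
    n, n_features = len(X), len(X[0])
    intercept, weights = 0.0, [0.0] * n_features
    for _ in range(epochs):
        grad_b, grad_w = 0.0, [0.0] * n_features
        for xi, yi in zip(X, y):
            p = sigmoid(intercept + sum(w * x for w, x in zip(weights, xi)))
            error = p - yi  # derivative of the log-loss with respect to the linear score
            grad_b += error
            for j in range(n_features):
                grad_w[j] += error * xi[j]
        intercept -= lr * grad_b / n
        weights = [w - lr * g / n for w, g in zip(weights, grad_w)]
    return intercept, weights


# Invented toy data: feature = 1 if (hypothetical) ASA status >= III; outcome = 1 if an event occurred.
X = [[0]] * 8 + [[1]] * 8
y = [1, 0, 0, 0, 0, 0, 0, 0] + [1, 1, 1, 1, 0, 0, 0, 0]

intercept, (beta,) = fit_logistic(X, y)
odds_ratio = math.exp(beta)
print(f"coefficient = {beta:.3f}, odds ratio = {odds_ratio:.2f}")  # odds ratio close to 7
```

Because this toy model has a single binary feature, the fitted odds ratio should approach the value computed directly from the 2 × 2 table, (4/4)/(1/7) = 7, which serves as a sanity check that the fit converged.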

Unfortunately, not all data are organized and structured in a manner suitable for traditional statistical approaches. Moreover, many interesting problems violate the requisite assumptions of traditional statistical testing or prioritize prediction accuracy over mechanistic understanding. For example, a two-dimensional image used to predict the risk of an outcome contains substantial information that can be difficult to extract. Information exists not only in the intensity of each pixel but also in the relationships between nearby pixels and between distant pixels, and in the broader shapes formed by groups of pixels. Given these differences in data structure and in how latent information is organized, classifying the needle location on an ultrasound image as either “safe” or “dangerous” may be quite challenging for logistic regression but perhaps relatively straightforward for machine-learning techniques such as a deep convolutional neural network. Machine learning offers important advantages when working with messy data or addressing nontraditional problems.
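The point about relationships between neighboring pixels is what convolutional layers exploit. The plain-Python sketch below slides a small 3 × 3 kernel over an invented 5 × 5 "image" containing one bright horizontal line (loosely standing in for a bright linear echo such as an in-plane needle). The kernel is hand-set for illustration; in a convolutional neural network, kernel weights are learned from labeled training images and many such layers are stacked. All names and values here are hypothetical.

```python
def conv2d_valid(image, kernel):
    """Slide kernel over image without padding ('valid' cross-correlation, as CNN layers compute)."""
    kh, kw = len(kernel), len(kernel[0])
    out_h, out_w = len(image) - kh + 1, len(image[0]) - kw + 1
    output = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            # Weighted sum over the kh x kw neighborhood whose top-left corner is (i, j).
            total = 0
            for di in range(kh):
                for dj in range(kw):
                    total += image[i + di][j + dj] * kernel[di][dj]
            row.append(total)
        output.append(row)
    return output


def relu(feature_map):
    """Keep positive responses and zero out the rest (a common CNN activation)."""
    return [[max(0, v) for v in row] for row in feature_map]


# Hand-set kernel: responds to a bright row flanked by darker rows; its weights sum to zero.
line_kernel = [[-1, -1, -1],
               [2, 2, 2],
               [-1, -1, -1]]

image = [[1] * 5, [1] * 5, [9] * 5, [1] * 5, [1] * 5]
brighter = [[v + 5 for v in row] for row in image]  # same structure, uniformly brighter

print(relu(conv2d_valid(image, line_kernel)))  # [[0, 0, 0], [48, 48, 48], [0, 0, 0]]
print(conv2d_valid(image, line_kernel) == conv2d_valid(brighter, line_kernel))  # True
```

Because the kernel's weights sum to zero, uniformly brightening the image leaves the response unchanged: the output reflects how each pixel relates to its neighbors rather than raw intensity. A logistic regression on raw pixel values would instead treat each pixel as a separate feature and would not capture this neighborhood structure directly.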

Machine learning has many potential applications in acute pain medicine. Machine-learning classifiers trained on larger and more complex electronic health record datasets may improve the prediction of pain-related outcomes after surgery. Another exciting advance driven by deep learning is the potential for real-time annotation of ultrasound images to highlight regions of interest, such as the location of a nerve, or to warn of a compressed vein. Interest is also growing in machine-learning approaches to facial recognition as a pseudo-objective marker of pain intensity, an opportunity that may be especially valuable for at-risk populations such as neonates, patients with advanced dementia, and other patients with limited ability to communicate.

The utility of statistics, outcomes research, and machine learning is limited by our ability to apply the resulting knowledge in ways that offer holistic patient benefit. Despite the increasing attention to machine learning and AI, we must remember that our patients are more than their data.

Conclusion

Virtual reality, mixed-reality simulation, and artificial intelligence are three technologic advancements that will change how we approach patient management and augment the treatment of acute pain in the future. Each modality requires more research and development before it achieves broad application; however, the potential for gentler, safer, and more effective acute pain management is on the horizon.

References

1. Hoffman HG, Chambers GT, Meyer WJ 3rd, et al. Virtual reality as an adjunctive non-pharmacologic analgesic for acute burn pain during medical procedures. Ann Behav Med. 2011;41(2):183–191. https://doi.org/10.1007/s12160-010-9248-7

2. Li A, Montano Z, Chen VJ, Gold JI. Virtual reality and pain management: current trends and future directions. Pain Manag. 2011;1(2):147–157. https://doi.org/10.2217/pmt.10.15

3. Gold JI, Belmont KA, Thomas DA. The neurobiology of virtual reality pain attenuation. Cyberpsychol Behav. 2007;10(4):536–544. https://doi.org/10.1089/cpb.2007.9993

4. Sharar SR, Carrougher GJ, Nakamura D, et al. Factors influencing the efficacy of virtual reality distraction analgesia during postburn physical therapy: preliminary results from 3 ongoing studies. Arch Phys Med Rehabil. 2007;88(12 Suppl 2):S43–S49. https://doi.org/10.1016/j.apmr.2007.09.004

5. Hoffman HG, Richards TL, Van Oostrom T, et al. The analgesic effects of opioids and immersive virtual reality distraction: evidence from subjective and functional brain imaging assessments. Anesth Analg. 2007;105(6):1776–1783. https://doi.org/10.1213/01.ane.0000270205.45146.db

6. Das DA, Grimmer KA, Sparnon AI, et al. The efficacy of playing a virtual reality game in modulating pain for children with acute burn injuries: a randomized controlled trial [ISRCTN87413556]. BMC Pediatr. 2005;5(1):1. https://doi.org/10.1186/1471-2431-5-1

7. Furman E, Jasinevicius TR, Bissada NF, et al. Virtual reality distraction for pain control during periodontal scaling and root planing procedures. J Am Dent Assoc. 2009;140(12):1508–1516. https://doi.org/10.14219/jada.archive.2009.0102

8. Keefe FJ, Huling DA, Coggins MJ, et al. Virtual reality for persistent pain: a new direction for behavioral pain management. Pain. 2012;153(11):2163–2166. https://doi.org/10.1016/j.pain.2012.05.030

9. Pandya PG, Kim TE, Howard SK, et al. Virtual reality distraction decreases routine intravenous sedation and procedure-related pain during preoperative adductor canal catheter insertion: a retrospective study. Korean J Anesthesiol. 2017;70(4):439–445. https://doi.org/10.4097/kjae.2017.70.4.439

10. Bzdok D, Altman N, Krzywinski M. Points of significance: statistics versus machine learning. Nat Methods. 2018;15:233–234. https://doi.org/10.1038/nmeth.4642
